```python
KB = 1024
MB = 1024 * KB

# Read sizes to benchmark, paired with the number of repetitions for each size
chunksizes = [4 * KB, MB, 10 * MB, 100 * MB]
iterations = [100, 100, 100, 10]

# One open file handle per (library, driver) combination
handles = {
    ('pyarrow', 'libhdfs'): hdfs.open(path),
    ('pyarrow', 'libhdfs3'): hdfscpp.open(path),
    ('hdfs3', 'libhdfs3'): hdfs3_fs.open(path, 'rb')
}

timings = []
for (library, driver), handle in handles.items():
    for chunksize, niter in zip(chunksizes, iterations):
        tester = make_test_func(handle, chunksize)
        timing = ensemble_average(tester, niter=niter)  # average time per run
        throughput = chunksize / timing  # bytes read per unit of time
        result = (library, driver, chunksize, timing, throughput)
        print(result)
        timings.append(result)
```
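The loop relies on two helpers, `make_test_func` and `ensemble_average`, that are not shown in this listing. As a rough sketch of what such helpers could look like, here is a hypothetical version that reads a single chunk from the start of the file and averages wall-clock time over `niter` calls. The bodies below are assumptions for illustration, not the definitions used to produce the numbers in this post, and an in-memory `io.BytesIO` buffer stands in for an HDFS file handle so the snippet runs anywhere.

```python
import io
import time


def make_test_func(handle, chunksize):
    """Hypothetical stand-in: build a callable that reads one chunk."""
    def runner():
        # Rewind so every call reads the same bytes
        handle.seek(0)
        return handle.read(chunksize)
    return runner


def ensemble_average(runner, niter=10):
    """Hypothetical stand-in: mean wall-clock seconds per call."""
    start = time.perf_counter()
    for _ in range(niter):
        runner()
    return (time.perf_counter() - start) / niter


# In-memory buffer used in place of a real HDFS file handle (assumption)
handle = io.BytesIO(b"x" * (1024 * 1024))
chunksize = 4 * 1024

tester = make_test_func(handle, chunksize)
timing = ensemble_average(tester, niter=100)
throughput = chunksize / timing  # bytes per second
print((chunksize, timing, throughput))
```

Any object with `seek` and `read` methods can be passed as `handle`, which is why the same loop can compare file handles from different libraries.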

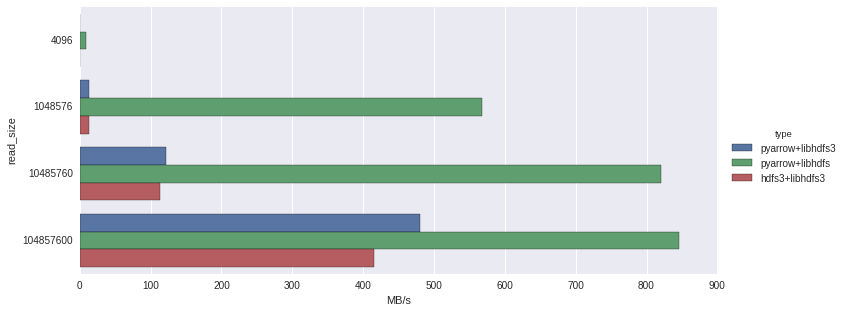

```
('pyarrow', 'libhdfs3', 4096, 0.07757587, 52799.923481360885)
('pyarrow', 'libhdfs3', 1048576, 0.07873925999999982, 13317066.987929558)
('pyarrow', 'libhdfs3', 10485760, 0.08238820000000033, 127272594.86188506)
('pyarrow', 'libhdfs3', 104857600, 0.20835439999999608, 503265589.7835705)
('pyarrow', 'libhdfs', 4096, 0.0004367300000001251, 9378792.388887474)
('pyarrow', 'libhdfs', 1048576, 0.0017632700000001478, 594676935.4664414)
('pyarrow', 'libhdfs', 10485760, 0.01217960000000005, 860928109.2975103)
('pyarrow', 'libhdfs', 104857600, 0.11822549999999979, 886928792.8577183)
('hdfs3', 'libhdfs3', 4096, 0.07886105999999984, 51939.44894983669)
('hdfs3', 'libhdfs3', 1048576, 0.07959901000000003, 13173229.164533574)
('hdfs3', 'libhdfs3', 10485760, 0.08876361000000031, 118131292.76738478)
('hdfs3', 'libhdfs3', 104857600, 0.2404344999999978, 436117112.9767191)
```
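The last field of each tuple is a raw `chunksize / timing` value, which is hard to compare at a glance. A small sketch of one way to make it more readable is to rescale it by `1024 * 1024`, using the 100 MB rows copied from the output above. It assumes the timings are in seconds, so the result is in MB/s; the helper name `to_mb_per_s` is made up for this example.

```python
MB = 1024 * 1024

# 100 MB rows copied from the printed results:
# (library, driver, chunksize, timing, throughput)
results = [
    ('pyarrow', 'libhdfs3', 104857600, 0.20835439999999608, 503265589.7835705),
    ('pyarrow', 'libhdfs', 104857600, 0.11822549999999979, 886928792.8577183),
    ('hdfs3', 'libhdfs3', 104857600, 0.2404344999999978, 436117112.9767191),
]


def to_mb_per_s(throughput):
    """Rescale a bytes-per-second figure to MB/s (assumes seconds)."""
    return throughput / MB


for library, driver, chunksize, timing, throughput in results:
    print(f"{library:8s} {driver:9s} {chunksize // MB:4d} MB "
          f"{to_mb_per_s(throughput):8.1f} MB/s")
```

The same rescaling can be applied to the full `timings` list built by the benchmark loop.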